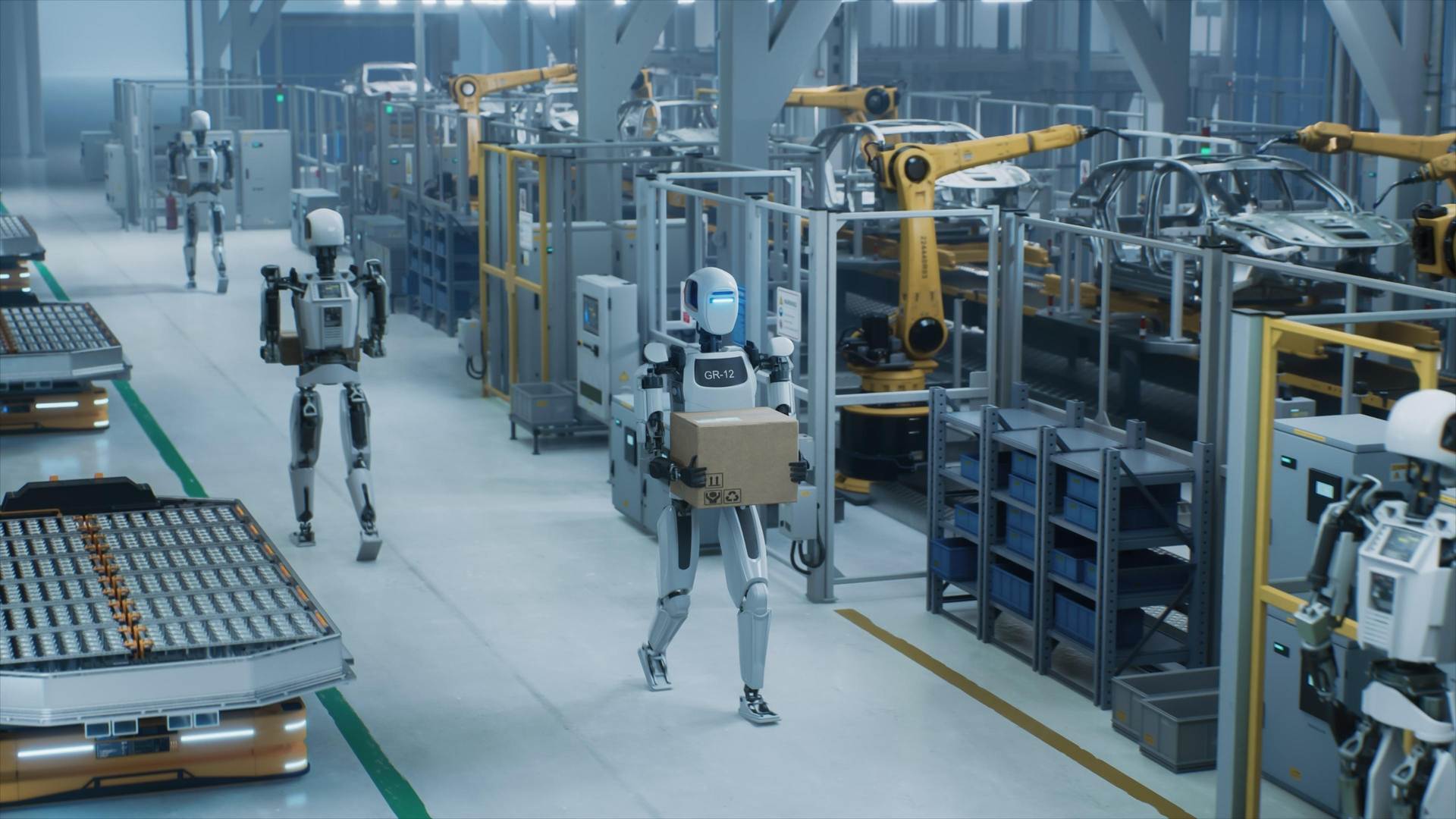

Physical AI and humanoid robots enter the scene

AI moved beyond screens into physical systems. Google’s Gemini Robotics now enables physical robots to perform adaptive, dexterous tasks, including object manipulation and even origami. Alibaba launched RynnBrain, a robotics-focused “physical AI” model for perception and real-world interaction. SoftBank and Nvidia invested $1bn in Skild AI at a $14bn valuation, backing a robot-agnostic intelligence platform designed to power diverse robotic systems.

Humanoid robotics moved from demonstration to deployment. Tesla is converting its Fremont production lines to Optimus manufacturing, with a dedicated facility under construction at Giga Texas targeting up to 10 million units per year by 2027. CEO Elon Musk projects a price under $30,000 once production scales. Figure AI introduced Figure 03 in October 2025, featuring a redesigned sensory suite with tactile sensors sensitive enough to detect three grams of pressure. By February 2026, Figure had placed its fleet on 24/7 duty, and Toyota had integrated Digit humanoids into its operations. Nearly 90% of all humanoid robots sold globally in 2025 came from Chinese manufacturers, and six of the sector's top-selling companies were Chinese. At CES 2026, Boston Dynamics unveiled its all-new Electric Atlas, an enterprise-grade humanoid for material handling and order fulfillment.

Critical infrastructure integration accelerates across sectors – notably defense

Nokia and Nvidia formed a $1bn partnership to develop AI-native 5G-Advanced and 6G networks. The US Department of Transportation plans to use AI to draft federal regulations and is expanding its agentic AI capabilities for operations. In one industry report, a majority of energy experts said AI is essential to the energy transformation.

AI weaponization entered a dangerous new phase. In January 2026, Defense Secretary Pete Hegseth issued a sweeping AI Acceleration Strategy declaring that the Pentagon would become an “AI-first warfighting force” and that “2026 will be the year we emphatically raise the bar for Military AI Dominance.” The Pentagon awarded contracts worth up to $200m each to OpenAI, Google, Anthropic, and xAI, while Elon Musk’s xAI agreed to deploy its Grok model on classified military networks without usage restrictions. In late February, the Pentagon issued an ultimatum to Anthropic: remove all safety guardrails for military use by Friday or face contract termination, designation as a supply chain risk, and potential invocation of the Defense Production Act. Anthropic’s CEO Dario Amodei refused, declaring that the company “cannot in good conscience accede” to demands permitting mass domestic surveillance and fully autonomous weapons, capabilities he described as “simply outside the bounds of what today’s technology can safely and reliably do.” Shortly afterwards, Anthropic announced it was replacing its Responsible Scaling Policy with a more flexible Frontier Safety Roadmap built on nonbinding commitments, a striking shift for a company founded out of safety concerns.

On the battlefield, Ukraine scaled drone production from 2.2 million units in 2024 to 4.5 million in 2025, with AI-enabled autonomous navigation raising strike success rates from 10–20% to 70–80%. Germany-based Helsing has delivered thousands of AI-equipped loitering munitions to Ukraine and is developing Europa, an autonomous fighter jet drone slated for 2029. A February 2026 study by Kenneth Payne, Professor of Strategy at King’s College London, revealed that when frontier AI models were placed in simulated nuclear crisis scenarios, they deployed tactical nuclear weapons in 95% of games, showing no sense of horror at nuclear escalation and treating battlefield nuclear weapons as routine tools.
