by Faisal Hoque, Paul Scade, Pranay Sanklecha • Published October 9, 2025 in Artificial Intelligence • 8 min read

Artificial intelligence is reshaping the competitive landscape, and governments are racing to position their economies for leadership. In the United States, the recently announced AI Action Plan signals a decisive shift in policy, favoring deregulation and rapid innovation over precaution and control.

The plan, titled “Winning the Race: America’s AI Action Plan,” marks an important shift in both policy and philosophy. Previous US administrations had the government coordinate and safeguard AI development, as most other countries still do. The plan instead emphasizes deregulation, private-sector development, and a “try-first” mentality.

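To pursue this approach, it outlines dozens of federal policy actions distributed across three pillars: accelerating AI innovation, building American AI infrastructure, and leading in international AI diplomacy and security.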

While the plan still includes room for national standards and approaches to evaluating AI tech and usage, such as NIST’s AI Risk Management Framework, the underlying philosophy is striking in its openness. Its goal is to “dismantle regulatory barriers” and support faster and more far-reaching AI innovation than was previously possible.

For multinationals operating in, or seeking to operate in, the United States, these principles create both opportunities and risks.

On the one hand, a minimalist, light-touch regulatory environment will let businesses test minimum viable products (MVPs), implement new tools, and bring new products to market faster than ever before. On the other hand, with fewer prescriptive federal guardrails, there is a heightened risk that flawed algorithms or systems rushed into production will fail to meet consumer needs or even work directly against consumers’ interests. Such outcomes carry significant ethical and brand risks for the company responsible.

As outlined in the I by IMD article ‘From consumers to code: America’s audacious AI export move’, senior leaders cannot afford to ignore the new environment created by the Action Plan, if only because competitors will move quickly to exploit it. Effective engagement requires a systematic approach that captures the full range of opportunities the plan offers while minimizing the risks involved.

Responding effectively to the AI Action Plan requires a dual mindset that encompasses both radical optimism and deep caution. In our recent book TRANSCEND and an accompanying article in Harvard Business Review, we set out two complementary frameworks designed to help companies operationalize this dual mindset while thinking systematically about how to implement AI-driven transformation.

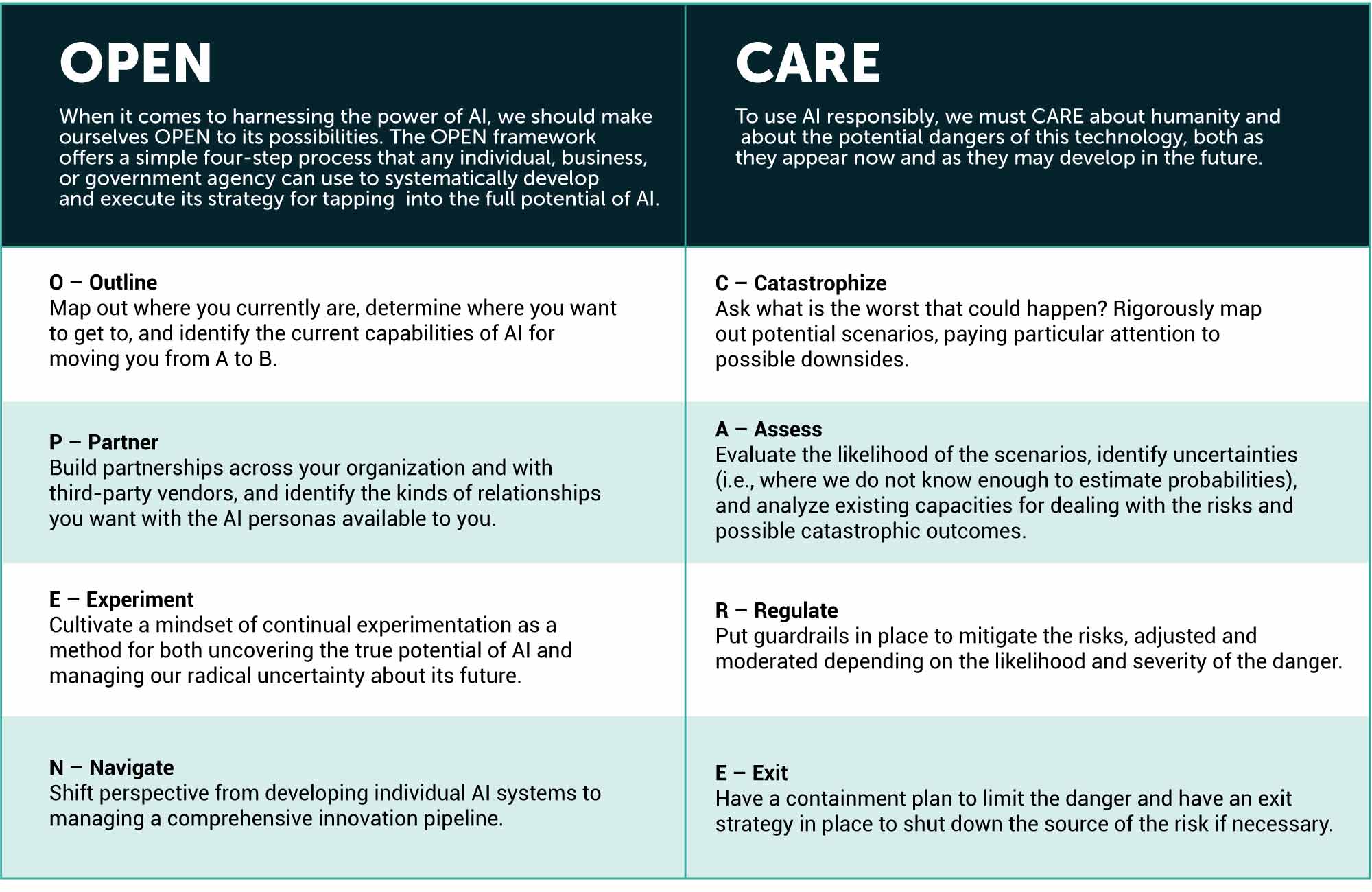

The OPEN framework – Outline, Partner, Experiment, Navigate – helps companies fully harness AI’s enormous potential, while the CARE framework – Catastrophize, Assess, Regulate, Exit – ensures they effectively manage AI’s equally enormous risks.

These frameworks provide useful scaffolding for implementing AI in any environment. But four components – Partner, Experiment, Catastrophize, and Exit – are particularly valuable when responding to the US AI Action Plan.

In the OPEN framework, the Partner stage offers a tool for choosing and shaping relationships so you can bridge resource and knowledge gaps – whether what’s missing is , data, or hard-won know-how. The goal here is to narrow the gap between ambition and capability. In the context of America’s AI Action Plan, progress will often run through partnerships. These include conceptual partnerships between government and the private sector – with government coordinating priorities and standards while firms do the building – and practical collaboration among companies to use common open-weight AI stacks across the supply chain, consistent with the plan’s push for interoperability.

Partnerships also make you more resilient. The plan emphasizes the importance of standards, evaluation, and secure supply chains. Working with the groups shaping those norms will help ensure that your compliance evidence is reusable across business units, boards, and regulators. Understanding future export-control expectations can also reduce the chances that critical components or export paths become unavailable.

Aim to make customers feel like you are experimenting alongside them, not on them.

In OPEN, Experiment means running small, real-world trials to answer practical questions, such as “What value does this create?” “What are the risks and costs?” and “What would it take to run it at scale?” The aim is to learn quickly and inexpensively on the way to an actionable decision about whether to take a program further or kill it. In the regulatory environment created by America’s AI Action Plan, the US market will offer uniquely favorable conditions for running “AI labs in the wild.” Companies will be able to put new features in front of customers faster and with fewer restrictions, dramatically shortening the path from proof of concept to working product.

In the CARE framework, Catastrophize means identifying the worst ways an AI system could plausibly fail so that it is possible to prepare for the risk and avoid or mitigate it. With the light-touch, try-first environment envisioned in America’s Action Plan, the responsibility for catastrophizing shifts decisively to businesses. As the government limits its regulatory requirements, businesses need to take up the slack by becoming the primary custodians of responsible AI implementation.

This is not just an ethical obligation. It is also sound business practice. When a company proactively identifies risks and defines which levels of risk are acceptable, its leaders can approve appropriate plans with greater speed and confidence.

In CARE, Exit means determining well in advance of need precisely under what conditions and how you will stop or unwind an AI implementation. While the AI Action Plan includes a range of guardrails and standards, it leaves control over the exit process almost entirely in the hands of businesses. Pre-defined exit plans shorten crises, limit harm, and preserve value, so it is important to treat them as part of the design, not an afterthought.

Think of the Exit step as the development of a three-part architecture, with technical, reputational, and legal layers. The goal is simple: if an AI system misfires, or if public sentiment requires an implementation to come to an end, your teams must know who pulls the plug, what gets rolled back, and how fast normal service resumes.

America’s AI Action Plan represents a watershed moment for global multinationals, offering unprecedented freedom to innovate while demanding equally robust responsibility in implementation. Success in this new landscape requires that companies move beyond traditional risk-reward calculations and embrace a sophisticated dual approach – pursuing transformative opportunities while vigilantly managing risks.

By adopting these complementary twin tracks while focusing on strategic partnerships, rapid experimentation, proactive risk identification, and clear exit strategies, multinationals can position themselves not just to navigate the AI revolution but to help shape its trajectory.

Executive Fellow at IMD and founder of SHADOKA and NextChapter

Faisal Hoque is a transformation and innovation leader with over 30 years of experience driving sustainable innovation, growth, and transformation for global organizations, including Mastercard, American Express, GE, PepsiCo, JPMorgan Chase, IBM, Northrop Grumman, the US Department of Defense, and the Department of Homeland Security. He is the founder of SHADOKA and NextChapter, among other companies, and is a three-time winner of Deloitte’s Technology Fast 50 and Fast 500 awards. Hoque is a best-selling and award-winning author of 11 books, including the USA Today and LA Times bestsellers Reimagining Government (2026) and Transcend (2025), a Financial Times book of the month named a “must-read” by the Next Big Idea Club. His 2023 book Reinvent was published in association with IMD and became a #1 Wall Street Journal bestseller. His research and thought leadership have been recognized globally; he also serves as a judge for MIT’s IDEAS Social Innovation Program.

Honorary Fellow at the University of Liverpool and a partner at SHADOKA

Paul Scade is an historian of ideas and an innovation and transformation consultant. His academic work focuses on leadership, psychology, and philosophy, and his research has been published by world-leading presses, including Oxford University Press and Cambridge University Press. As a consultant, Scade works with C-suite executives to help them refine and communicate their ideas, advising on strategy, systems design, and storytelling. He is an Honorary Fellow at the University of Liverpool and a partner at SHADOKA.

Founder of The Philosophy Practice and partner at SHADOKA

Pranay Sanklecha is a philosopher, writer, and management consultant focusing on the intersection of technology, ethics, and practical leadership. Formerly an academic philosopher at the University of Graz, Sanklecha’s research on intergenerational justice includes a book published with Cambridge University Press. He now works with businesses to design and implement philosophy-led frameworks that deliver practical value. He is the founder of The Philosophy Practice and a partner at SHADOKA.